# ChatGPT Comparison: Pricing, Concepts, Models, and Usage

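## How to sign up for ChatGPT

1. Go to the OpenAI ChatGPT site: https://chat.openai.com/auth/login

2. Click the "Sign up" button to open the registration page.

3. Fill in your registration details (email address and password), or sign in with a Google account instead.

4. After confirming the verification email (or linking your account), enter your name, date of birth, and phone number.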

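## What is ChatGPT?

ChatGPT starts from a pre-trained GPT (a foundation model trained with self-supervised learning), which is then fine-tuned and further trained with reinforcement learning.

Slide source:

[2023機器學習 -李宏毅](https://www.youtube.com/watch?v=yiY4nPOzJEg&list=PLJV_el3uVTsOePyfmkfivYZ7Rqr2nMk3W)

(I highly recommend Prof. Lee's lectures; give them a listen when you have time.)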

:::spoiler ChatGPT?

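- ChatGPT is a member of OpenAI's GPT (Generative Pre-trained Transformer) model family that is tuned for dialogue. It is a deep learning model built on the Transformer architecture and, at the time of writing, a large language model based on GPT-3.5.

- Through unsupervised training on a large text corpus, ChatGPT learns the latent patterns and regularities of natural language. Given an input text, it can generate fluent, context-aware responses, so it is widely used for natural language processing tasks such as chatbots, question answering, text summarization, and translation.

> In plain terms: pre-training means feeding the model a huge amount of text.

> It uses the preceding text to produce what comes next (like a word-chain game).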

:::

> KEYWORDS: Natural language processing (NLP), Language modeling (LM), Deep learning, Transformer architecture, Unsupervised learning

:::spoiler Language modeling(LM)

Building a model that predicts the next word in a text sequence.

(What counts as a natural human sentence, and how do we construct one?)

:::

:::spoiler Deep learning

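Deep learning is a machine learning technique loosely inspired by the neural networks of the human brain. It extracts and learns features hierarchically, layer by layer. The neural networks it uses usually consist of many layers of neurons and are called deep neural networks (DNN), hence the name "deep learning".

Compared with traditional machine learning techniques, the main advantage of deep learning is that it learns high-level abstract features automatically and tends to perform better on complex problems. In image recognition, for example, traditional algorithms usually need hand-designed features, whereas deep learning learns the image features by itself and typically achieves higher recognition accuracy.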

:::

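:::spoiler Natural language processing (NLP)

The core techniques of NLP include language analysis, language generation, knowledge representation, and machine learning. Language analysis covers lexical, syntactic, semantic, and pragmatic analysis; language generation covers text generation, speech synthesis, and so on. For knowledge representation, NLP commonly uses forms such as symbolic logic and semantic networks. For machine learning, NLP commonly uses deep learning models such as recurrent neural networks (RNN), convolutional neural networks (CNN), and the Transformer.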

:::

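:::spoiler Unsupervised learning

Unsupervised learning is a machine learning approach that needs no labeled data: it discovers latent structure, patterns, and regularities in unlabeled data. The algorithm has to learn on its own, exploring similarities and differences in the data to perform tasks such as clustering and dimensionality reduction, and in doing so uncovers the underlying structure of the data.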

:::

:::spoiler Transformer architecture

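Its defining feature is that it relies entirely on the attention mechanism (self-attention) to capture the relationships and dependencies within the input sequence. Compared with traditional recurrent neural networks (RNN) or convolutional neural networks (CNN), the Transformer captures long-range dependencies better and can process all positions of the input sequence in parallel, which greatly speeds up training.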
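To make self-attention a little more concrete, here is a minimal pure-Python sketch of scaled dot-product attention. It is a simplification under stated assumptions: the token vectors themselves serve as queries, keys, and values (a real Transformer first applies learned projection matrices), and the function name `self_attention` and the toy `tokens` are made up for illustration.

```python
import math


def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    highest = max(row)
    exps = [math.exp(value - highest) for value in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention over a list of token vectors.

    Assumption: x is used directly as queries, keys, and values.
    """
    d = len(x[0])
    # scores[i][j]: how strongly token i attends to token j
    scores = [
        [sum(qi * kj for qi, kj in zip(q, k)) / math.sqrt(d) for k in x]
        for q in x
    ]
    weights = [softmax(row) for row in scores]
    # Each output vector is a weighted average of all token vectors.
    output = [
        [sum(w * v[c] for w, v in zip(w_row, x)) for c in range(d)]
        for w_row in weights
    ]
    return weights, output


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, output = self_attention(tokens)
```

Every row of `weights` is computed independently of the others, which is why all positions can be processed in parallel, unlike an RNN that must walk through the sequence step by step.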

:::

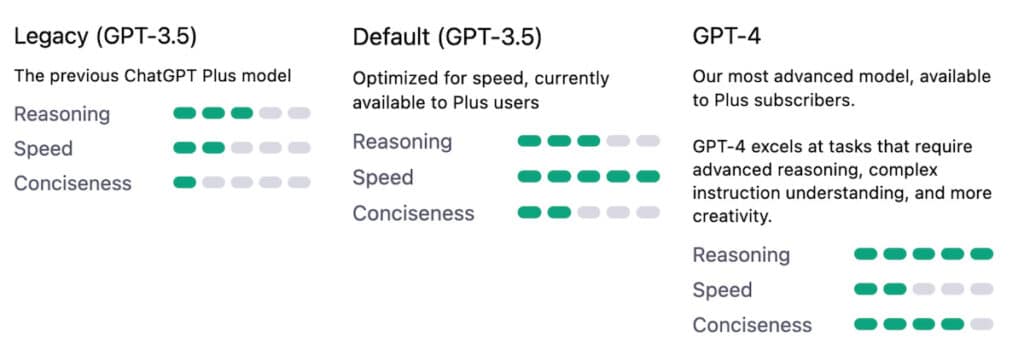

## ChatGPT-4 vs ChatGPT-3.5

### 1. Comparison table

| Item | ChatGPT-4 | ChatGPT-3.5 |
| ---- | ---- | --- |
| Year | 2023 | 2022 |
| Price | $20 USD/month (ChatGPT Plus) | Free |
| Parameters | Not disclosed by OpenAI (the widely circulated 100-trillion figure is unconfirmed) | 175 billion |
| Input data | Text and images | Text only |
| Response length limit | 25,000 | 700 |
| Exam performance (simulated bar exam) | Top 10% | Bottom 10% |
| English | 85% proficiency | 70% proficiency |
| Token limit | 8,192 | 4,096 |
| Prompt | Requires less context to provide the same answers | |

> Image source: Key Differences Between GPT-3.5 and GPT-4 | CitiMuzik

> Reference article: https://appuals.com/gpt-3-5-vs-gpt-4/

### 2. Detailed pricing by model (data compiled 2023/4)

| Model | Context | Prompt price / 1K tokens | Completion price / 1K tokens |
| ---- | ---- | --- | --- |
| text-davinci-003 | 4k | $0.02 | $0.02 |
| gpt-3.5-turbo | 4k | $0.002 | $0.002 |
| gpt-4 | 8k | $0.03 | $0.06 |
| gpt-4-32k | 32k | $0.06 | $0.12 |

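Note: how are tokens counted?

| | Traditional Chinese | English |
|---|---|---|
| Average tokens per character (Chinese) or per word (English) | 2.03 | 1.25 |

> Official tool for checking token counts: https://platform.openai.com/tokenizer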
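As a rough illustration of how the two tables combine, the sketch below estimates the cost of one API call. The prices and token averages are copied from the tables in this section (2023/4 figures); the function names `estimate_tokens` and `estimate_cost` and the example sizes are made up for illustration, and real token counts should be checked with the official tokenizer.

```python
# (prompt price, completion price) in USD per 1K tokens, from the table above.
PRICES_PER_1K = {
    "text-davinci-003": (0.02, 0.02),
    "gpt-3.5-turbo": (0.002, 0.002),
    "gpt-4": (0.03, 0.06),
    "gpt-4-32k": (0.06, 0.12),
}

# Average tokens per Chinese character / English word, from the table above.
TOKENS_PER_UNIT = {"zh-TW": 2.03, "en": 1.25}


def estimate_tokens(units, language):
    """Rough token estimate from a character count (zh-TW) or word count (en)."""
    return round(units * TOKENS_PER_UNIT[language])


def estimate_cost(model, prompt_tokens, completion_tokens):
    """Estimated cost in USD for a single request."""
    prompt_price, completion_price = PRICES_PER_1K[model]
    return (prompt_tokens / 1000 * prompt_price
            + completion_tokens / 1000 * completion_price)


# Example: a 1,000-character Traditional Chinese prompt and a 500-token answer.
prompt_tokens = estimate_tokens(1000, "zh-TW")
cost = estimate_cost("gpt-4", prompt_tokens, 500)
print(f"{prompt_tokens} prompt tokens, about ${cost:.4f}")
```

Because Traditional Chinese averages more tokens per character than English does per word, the same content tends to cost more when written in Chinese.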

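## How the ChatGPT model works

### Language model (LM)

A language model tells us whether a sentence reads like natural human language.

Note: LLM stands for Large Language Model.

#### Language models classified by training objective

- Autoregressive Language Models: GPT is the representative example today.

- Autoencoder Language Models: Google's BERT is the representative example.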

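#### Language models classified by technique

##### 1. Statistical Language Models

These mainly use traditional statistical techniques (N-gram, HMM) plus some linguistic rules to learn the probability distribution of sequences.

Given the sentence: "I love eating apples."

N is the number of words considered at a time when modeling: a unigram (1-gram) looks at one word at a time, a 2-gram (or bigram) looks at two, and so on.

- Unigram: the simplest and most direct approach. Count how often each word appears and use that frequency as its probability.

- Bigram/Trigram: the goal of an N-gram language model is, given a condition (the preceding word or words), to give the probability of each possible next word (via the chain rule). A bigram model is built like this:

```
p(I love eating apples) = p(I) * p(love | I) * p(eating | love) * p(apples | eating)
```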
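The bigram factorization can be tried out on a toy corpus. The sketch below is only an illustration: the three-sentence `corpus` is made up, and it adds `<s>` and `</s>` boundary markers (an assumption not in the formula above), so `p(I)` becomes `p(I | <s>)` and the product gains a final `p(</s> | apples)` term.

```python
from collections import Counter

# Made-up toy corpus, one sentence per entry.
corpus = [
    "I love eating apples",
    "I love eating pears",
    "I love apples",
]

context_counts = Counter()  # how often each word appears as the previous word
bigram_counts = Counter()   # how often each (previous, next) pair appears

for sentence in corpus:
    words = ["<s>"] + sentence.split() + ["</s>"]
    for prev, word in zip(words, words[1:]):
        context_counts[prev] += 1
        bigram_counts[(prev, word)] += 1


def bigram_prob(prev, word):
    """Maximum-likelihood estimate of p(word | prev)."""
    if context_counts[prev] == 0:
        return 0.0
    return bigram_counts[(prev, word)] / context_counts[prev]


def sentence_prob(sentence):
    """Probability of a sentence as a product of bigram probabilities."""
    words = ["<s>"] + sentence.split() + ["</s>"]
    prob = 1.0
    for prev, word in zip(words, words[1:]):
        prob *= bigram_prob(prev, word)
    return prob


print(bigram_prob("love", "eating"))
print(sentence_prob("I love eating apples"))
```

A sentence containing a word pair never seen in the corpus gets probability 0 here; practical N-gram models add smoothing to avoid that.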

:::spoiler Python demo

```python
from collections import defaultdict

from nltk import trigrams

# Placeholder corpus, one sentence per line (an assumption for illustration;
# the original snippet expects `data_text` to be loaded elsewhere).
data_text = """
I love eating apples
I love eating pears
"""

n_gram_sents = [line.strip().split(' ') for line in data_text.strip().split('\n') if line]

# Nested dict: model[(w1, w2)][w3] holds a count, later turned into a probability.
model = defaultdict(lambda: defaultdict(lambda: 0))

# Inspect the padded trigrams of the first sentence.
print(list(trigrams(n_gram_sents[0], pad_right=True, pad_left=True)))

# Count co-occurrences. trigrams() turns a sentence into 3-tuples, e.g. how many
# times "Declaration" appears right after "The unanimous".
for sentence in n_gram_sents:
    for w1, w2, w3 in trigrams(sentence, pad_right=True, pad_left=True):
        model[(w1, w2)][w3] += 1

# Turn counts into probabilities: divide each follower's count by the total
# number of times the pair (w1, w2) occurred.
for w1_w2 in model:
    total_count = float(sum(model[w1_w2].values()))
    for w3 in model[w1_w2]:
        model[w1_w2][w3] /= total_count
```

> Code source: https://zhuanlan.zhihu.com/p/608047052

For example, `model['in', 'the']` uses the model to look up which words can follow "in the"; with the source article's data, the defaultdict returns {'Course': 0.25, 'Name': 0.125}.

:::

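##### 2. Neural Language Models

These mainly use neural networks (NN) to learn the probability distribution of sequences.

- Neural Probabilistic Language Model (NPLM)

  NPLM learns a probabilistic language model with a neural network, in effect improving on n-gram learning. The input is the one-hot encoding (or the corresponding word vectors) of the context words, and the output is a probability distribution over the next word. NPLM uses static word vector representations.

- Word Embedding: Word2vec (Mikolov et al. 2013) is a framework for learning word vectors.

- Embeddings from Language Models (ELMo)

  ELMo trains a language model with a deep neural network, and that model learns the meaning and grammar of words in context. Unlike traditional word vectors, ELMo vectors are generated dynamically, so the vector for each word changes with its context.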

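### About chatbots

#### 1. Categories

- By technique:

  Pipeline (Rasa) vs. end-to-end (ChatGPT)

- By dialogue form:

  Single-turn (question answering, QA) vs. multi-turn (the current reply can build on earlier turns)

- By task type:

  Task-oriented vs. open-domain

> ChatGPT can be described as a multi-turn, end-to-end, open-domain dialogue system.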

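#### 2. Training path

The model is trained as follows: first train a strong LM (the GPT series), then train it further with Reinforcement Learning from Human Feedback (RLHF), and from that build the dialogue model ChatGPT.

Notes:

:::spoiler Fine-tuning

Fine-tuning means taking a pre-trained model and adjusting it further for a specific task. In machine learning this is commonly regarded as a form of transfer learning.

In natural language processing, pre-trained language models (such as BERT and GPT) are usually trained on large-scale text data to learn the meaning and regularities of words and language structure. These pre-trained models can then be fine-tuned for specific downstream tasks such as text classification, named entity recognition, and machine translation.

During fine-tuning, the parameters of the pre-trained model serve as the initial values and are adjusted by backpropagation on the specific task to minimize the loss function. Through fine-tuning, the pre-trained model learns task-specific expressions and features, which improves its performance on that task.

* Backpropagation: the key idea is to pass the error backward through the network so that each weight can be updated by gradient descent using that information, further reducing the error.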
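The update rule can be seen in miniature with a single weight. The sketch below is a toy under stated assumptions: the model is just `y = w * x`, the starting value `w = 2.5` stands in for a "pre-trained" parameter, and the data point and learning rate are made up for illustration.

```python
# One training example: the ideal weight for y = w * x is 3.0.
x, y_true = 2.0, 6.0

w = 2.5              # stands in for a pre-trained parameter (assumption)
learning_rate = 0.1

for step in range(50):
    y_pred = w * x                       # forward pass
    loss = (y_pred - y_true) ** 2        # squared error
    grad_w = 2 * (y_pred - y_true) * x   # backward pass: d(loss)/d(w) by the chain rule
    w -= learning_rate * grad_w          # gradient descent update

print(w)
```

Starting from a value that is already close to the target mirrors why fine-tuning usually needs far less data and far fewer steps than training from scratch.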

:::

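## How can ChatGPT read real-time, private, or company data and answer questions about it?

### 1. Feed it pre-processed data directly

1. Collect the data, either by web scraping existing sites or from data you already have (prices, product descriptions, and so on).
2. Process the data with Python (chunking, embedding, and so on).
3. Run the data through OpenAI's models and store the resulting vectors together with the data.
4. On the front end the user enters a question, which is sent to the ChatGPT-backed back end that has access to the stored data.

> Official documentation: https://platform.openai.com/docs/tutorials/web-qa-embeddings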

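Note: the open-source combination llama-index + langchain can be used to load and process the data.

llama-index worked example: https://zhuanlan.zhihu.com/p/613155165

Introduction to langchain and llama-index: [What exactly are langchain + llama-index?](https://hackmd.io/@flora8411/langchain-llama-index)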

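### 2. Chat plugins

1. You provide a well-defined API specification and description directly.
2. At the time of writing, plugins are only available to ChatGPT Plus users who have applied to join the waitlist.
3. An unverified plugin can be installed by at most 15 users.

> Official documentation: https://platform.openai.com/docs/plugins/getting-started

Note:

If you are not providing an API specification, you can use this instead:

https://github.com/openai/chatgpt-retrieval-plugin#chatgpt-retrieval-plugin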

-----------------------

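### More on the first approach

Using OpenAI's embedding model and your own database, you first search your own data to get context, then include the relevant content from your database when calling the ChatGPT API. ChatGPT thus gets context from your dataset and combines it with what it learned from its own vast training data to give a more relevant answer.

Here is how it works in detail (see the figure).

1. First prepare the text you want the system to draw on. Convert it to an easy-to-process format such as CSV or JSON and split it into small chunks of no more than 8,191 tokens each, because that is the input length limit of the OpenAI embeddings model.

2. Then use a script to call the OpenAI embedding API in batches (the latest model at the time of writing is text-embedding-ada-002), turning each text chunk into a vector.

In short, to judge how similar two pieces of text are, OpenAI first turns both into numeric vectors (vector embeddings), like a set of coordinates. Comparing the two vectors yields a similarity score, typically a decimal between 0 and 1; the closer to 1, the more similar the texts.

3. Save the converted results to your own database. Note that ordinary relational databases do not support this kind of vector data out of the box, so you need a database built for it, such as Pinecone, or Postgres with the pgvector extension.

When saving, store the original text chunk together with its vector so that the original text can be recovered from a matching vector. (This is similar to building an index over the data for full-text search.)

(See the Script-to-DB step in Figure 1.)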

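4. When a search is needed, first call the OpenAI embedding API to turn your search keywords into a vector.

(See Search App to OpenAI in Figure 1.)

With that vector, query your own database to get a result set. Each result carries a similarity score, and a higher score means a better match, so the results can be returned in descending order of relevance.

(See the Search App-to-DB step in Figure 1.)

5. Chat-style question answering is slightly more involved.

When the user asks a question, first search your own database for a result set related to the question.

(See the Chat App-to-Search App step in Figure 1.)

Then add that result set to the prompt sent to ChatGPT.

(See the Chat App-to-OpenAI step in Figure 1.)

For example, suppose the user asks "What's the makers's schedule?" and the passages retrieved from the database are "What I worked on..." and "Taste for Makers...". The final prompt then looks like this: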

```js
[
  {
    role: "system",
    content: "You are a helpful assistant that accurately answers queries using Paul Graham's essays. Use the text provided to form your answer, but avoid copying word-for-word from the essays. Try to use your own words when possible. Keep your answer under 5 sentences. Be accurate, helpful, concise, and clear."
  },
  {
    role: "user",
    content: `Use the following passages to provide an answer
to the query: "What's the makers's schedule?"
1. What I worked on...
2. Taste for Makers...`
  }
]
```

(LlamaIndex implements this same approach.)
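The retrieve-then-prompt flow described in this section can be sketched end to end in a few lines of Python. This is a toy under loud assumptions: `toy_embed` is a bag-of-words stand-in for a real embedding API call (it is not how OpenAI embeddings work), the in-memory `index` list stands in for a vector database, and the sample chunks, function names, and system message are made up for illustration.

```python
import math
from collections import Counter


def toy_embed(text):
    """Stand-in for an embedding API call: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine_similarity(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# "Database": keep each original chunk next to its vector.
chunks = [
    "The store opens at nine and closes at six on weekdays",
    "Shipping takes three to five business days",
    "Refunds are accepted within thirty days of purchase",
]
index = [(chunk, toy_embed(chunk)) for chunk in chunks]


def search(query, top_k=2):
    """Return the top_k (score, chunk) pairs, best match first."""
    query_vec = toy_embed(query)
    scored = [(cosine_similarity(query_vec, vec), chunk) for chunk, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


def build_messages(query, passages):
    """Assemble chat messages that carry the retrieved passages as context."""
    numbered = "\n".join(f"{i}. {p}" for i, p in enumerate(passages, start=1))
    return [
        {"role": "system",
         "content": "You are a helpful assistant. Answer using the provided passages."},
        {"role": "user",
         "content": "Use the following passages to provide an answer "
                    f'to the query: "{query}"\n{numbered}'},
    ]


query = "When does the store open on weekdays"
results = search(query)
messages = build_messages(query, [chunk for _, chunk in results])
```

In a real pipeline, `toy_embed` would be replaced by a call to the embedding model and `index` by a vector store such as Pinecone or Postgres with pgvector; the search and prompt-assembly steps keep the same shape.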

> Code in this section is based on the project: https://github.com/mckaywrigley/paul-graham-gpt

> The author of paul-graham-gpt also provides a YouTube tutorial: https://www.youtube.com/watch?v=RM-v7zoYQo0&t=4085s

> Text in this section is adapted from: https://m.weibo.cn/status/4875446737175262

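## Other newly emerging combined AI projects

#### - AgentGPT

https://agentgpt.reworkd.ai/

---> AutoGPT:

Auto-GPT breaks a task into multiple steps and then analyzes and decides on each one, roughly as follows:

1. Understand the task: Auto-GPT first uses natural language understanding to turn the input task statement into structured data it can work with.

2. Decompose the task: Auto-GPT splits the task into multiple steps, much like breaking a large project into smaller tasks or subtasks. Each subtask should be a small step with a clear goal.

3. Generate solutions: for each subtask, Auto-GPT draws on what it has learned to generate a solution, which can be a series of instructions, operations, or decisions.

4. Execute: once a solution is generated, Auto-GPT automatically carries out the instructions, operations, or decisions to complete each subtask.

5. Monitor progress: Auto-GPT also monitors how execution is going and adjusts when necessary. If something abnormal or erroneous happens, it re-analyzes the task and generates a new solution so that the task still gets completed.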
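The five steps above amount to a plan, execute, and monitor loop. The sketch below only shows that control flow and is not Auto-GPT's actual code: `plan` and `execute` are hypothetical stubs standing in for LLM calls and tool use, and the simulated failure on the first "draft" attempt is made up to show the retry path.

```python
def plan(goal):
    """Hypothetical stub for an LLM call that decomposes a goal into subtasks."""
    return [f"research: {goal}", f"draft: {goal}", f"review: {goal}"]


def execute(step, attempt):
    """Hypothetical stub executor; the first 'draft' attempt fails on purpose."""
    if step.startswith("draft") and attempt == 0:
        return False, "draft was empty"
    return True, f"done ({step})"


def run_agent(goal, max_attempts=3):
    """Run each planned step, retrying failed steps up to max_attempts times."""
    log = []
    for step in plan(goal):
        for attempt in range(max_attempts):
            ok, result = execute(step, attempt)
            log.append((step, attempt, ok))  # monitoring: record every attempt
            if ok:
                break
        else:
            raise RuntimeError(f"gave up on step: {step}")
    return log


log = run_agent("write a report")
for step, attempt, ok in log:
    print(step, "attempt", attempt, "ok" if ok else "failed")
```

In a real agent, a failed step would typically be fed back to the model to produce a revised plan rather than simply retried unchanged.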

See also: https://www.zhihu.com/question/595359852

#### - A site cataloguing newly released open-source projects

https://theresanaiforthat.com/timeline/#switch