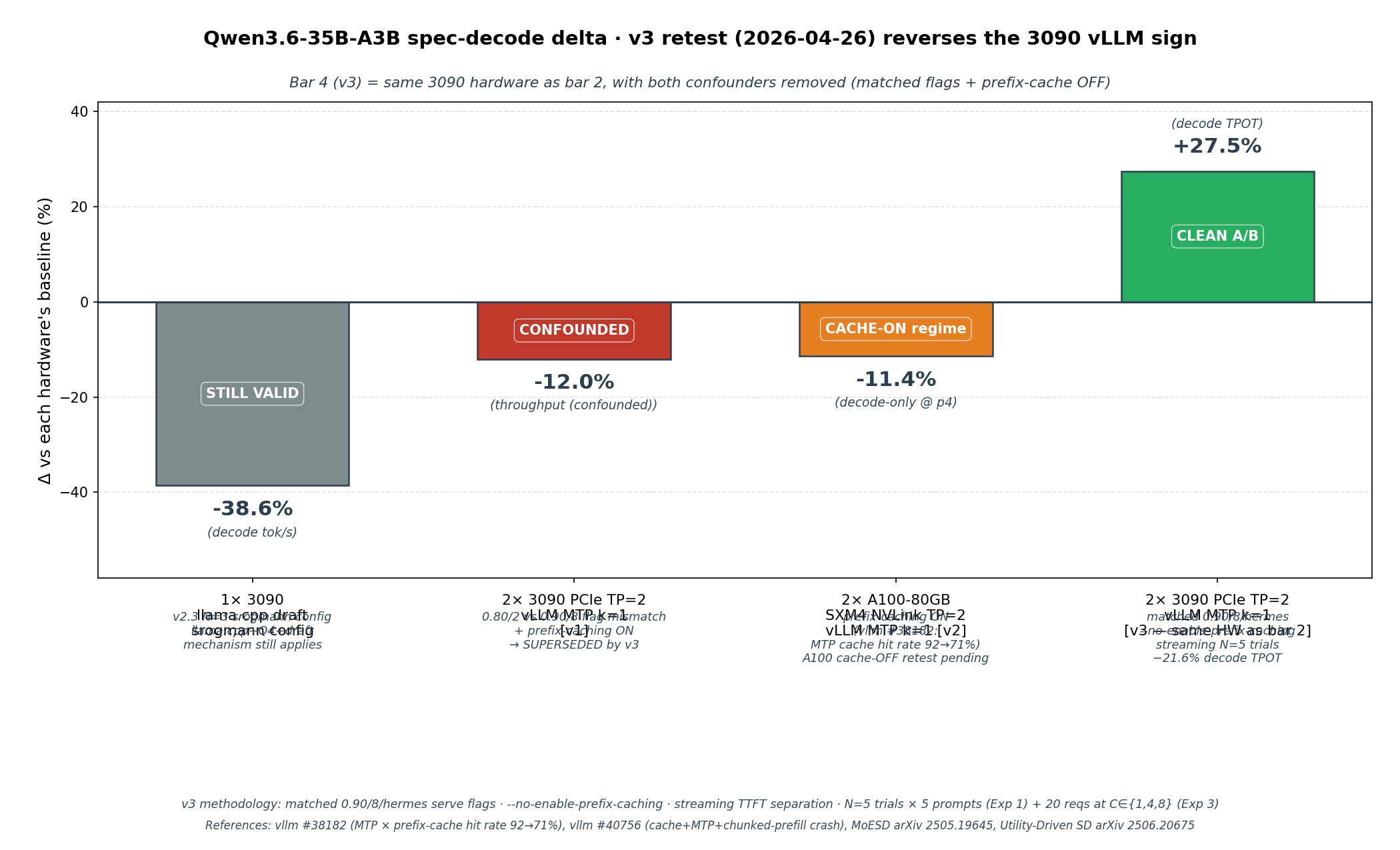

:::spoiler UPDATE 2026-04-26 — cross-hardware A100 NVLink clean A/B + N=3 replication

Two follow-ups today:

**1. A100 NVLink clean A/B (Modal)** — `vllm/vllm-openai:v0.19.1`, identical serve flags between no-MTP and MTP runs (`--gpu-memory-utilization 0.90 --max-num-seqs 8 --tool-call-parser hermes`). Same 5-prompt set, max_tokens=200, temperature=0.5, seed=42. Prompt-4 decode-only delta **−11.4 %** (TTFT-adjusted; varies <0.2 pp across TTFT ∈ [0, 200 ms] because both arms share the same TTFT for the same prompt content). This rules out two natural-sounding hypotheses:

- ❌ "GDDR6X memory bandwidth is the bottleneck" — HBM2e 2 TB/s shows the same magnitude regression

- ❌ "PCIe Gen4 x8 allreduce is the bottleneck" — NVLink ~600 GB/s shows the same magnitude regression

The mechanism is therefore **hardware-class-independent at single-stream batch=1**, consistent across consumer Ampere + datacenter Ampere (and Hopper H20-3e per [vllm #38182](https://github.com/vllm-project/vllm/issues/38182), Qwen3.5-35B-A3B-FP8 + MTP drops prefix-cache hit rate ~92 % → ~71 %).

**2. N=3 trial replication on a fresh standalone 3090** — addresses the N=1 caveat from v2 limitations. Numbers:

| Config | mean tok/s | run-to-run stdev | v2 published | match |

|---|---:|---:|---:|---|

| baseline | 139.19 | 0.105 | 139.9 | <0.5 pp |

| Oleg `--draft-min 2 --draft-max 32` | 65.24 | 0.057 | 65.0 | <0.3 pp |

| srogmann `--draft-min 48 --draft-max 64` | 85.50 | 0.086 | 85.6 | <0.2 pp |

→ v2 numbers are **N=3 reproducible**, not single-trial flukes.

**Cross-engine corroboration on DGX Spark GB10 forum threads** (April 2026, multiple users on `developer.nvidia.com`): same negative direction reported across vLLM FP8 + MTP-2, SGLang EAGLE3 (−48 % to −58 %), and DFlash spec-decode. The exceptions where spec-decode wins on Spark are batched serving (multi-user concurrency) or structured workloads (code/JSON/Q&A) where prompt entropy is low enough for high acceptance + small expert union — both of which are outside this bench's single-stream voice-dialog scope.

Full data: [thc1006/qwen3.6-vllm-2x3090](https://github.com/thc1006/qwen3.6-vllm-2x3090) (`results/modal_2x_a100_v2.json`, `analysis/plot_cross_hardware.png`).

The v1/v2 conclusions stand and now span more hardware than originally claimed: **single-stream spec-decode for ~3 B-active MoE is a net loss across hardware classes (consumer Ampere, datacenter Ampere with NVLink, Hopper, datacenter Blackwell on Spark per cross-engine forum reports), engines (llama.cpp, vLLM, SGLang), and quantisations (Q4_K_M, AWQ-Marlin Q4, FP8, NVFP4) tested**. The mechanism tracks MoE-Spec / Utility-Driven SD theory: K (1–32) ≪ T_thres ≈ 94, so verify pass loads expert-union with no amortization vs autoregressive — independent of memory bandwidth class.

:::

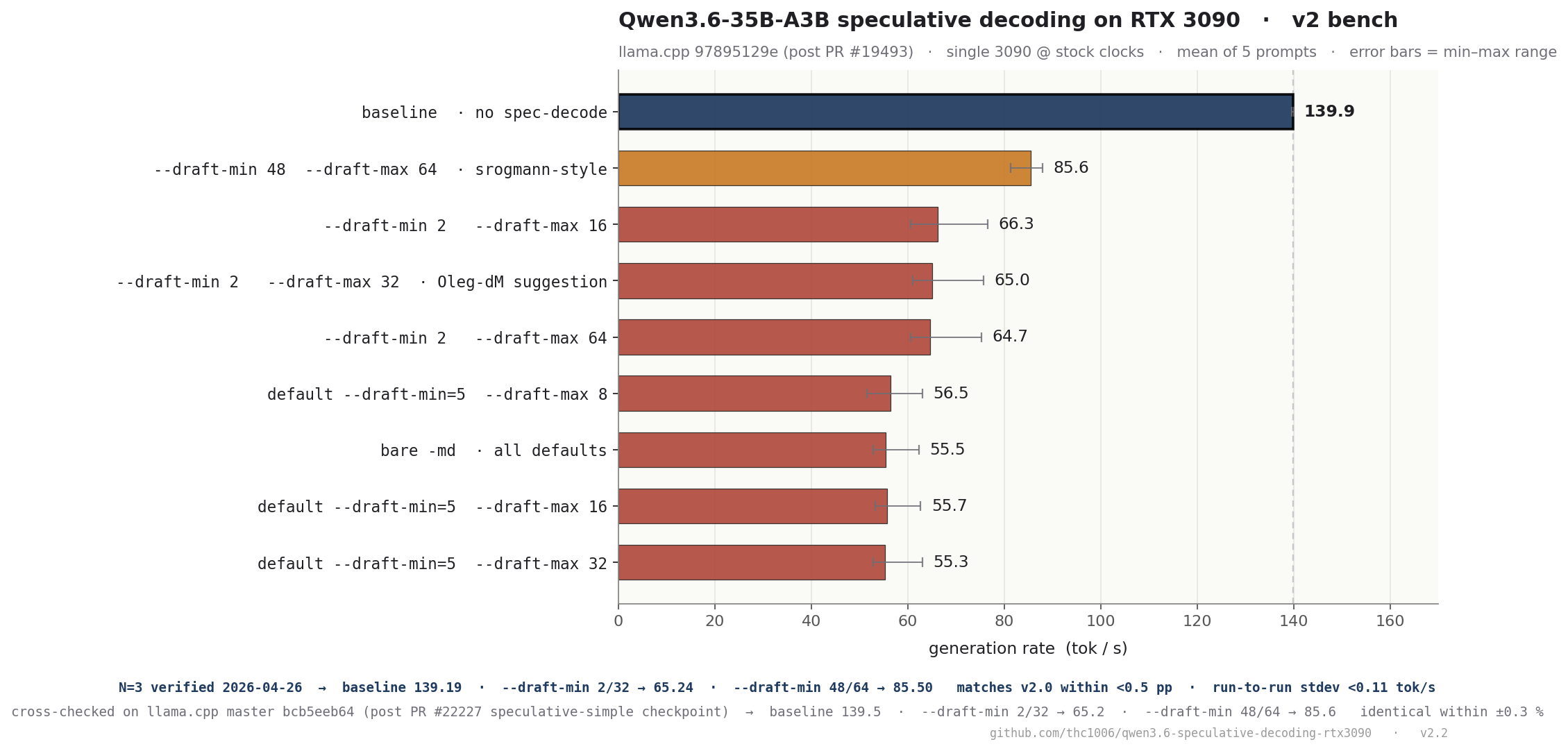

:::spoiler UPDATE 2026-04-22 — v2 follow-up bench

In response to [Oleg-dM's comment on the HF discussion](https://huggingface.co/unsloth/Qwen3.6-35B-A3B-GGUF/discussions/14), I re-ran the draft-model sweep on a fresh single-3090 box, plus a cross-check on current llama.cpp master `bcb5eeb64`. Short version:

- Oleg's `--draft-min 2 --draft-max 32` does beat the `--draft-min=5` defaults (65 vs 55 tok/s) but is still **−54 %** vs baseline 139.9.

- Aggressive `--draft-min 48 --draft-max 64` is the **least bad** recipe at **−39 %** — counter-intuitively the "wasteful" config amortises overhead better.

- 100 % acceptance is genuine (source read + `--verbose` confirm).

- Master gives same results ±0.3 % — not a stale-commit issue.

- Bottom line of the original post holds: **no spec-decode configuration on consumer 3090 beats baseline for this model+quant.**

Full v2 data + logs + master cross-check: [[Link](https://github.com/thc1006/qwen3.6-speculative-decoding-rtx3090/tree/master/v2_3090_followup)]

Read on for the original writeup; the v2 appendix at the bottom has the full result table.

:::

# Tested every llama.cpp speculative-decode mode on Qwen3.6-35B-A3B + RTX 3090 — none of them are faster than baseline

**TL;DR** — I ran a 19-configuration matrix of speculative decoding on Qwen3.6-35B-A3B (UD-Q4_K_XL, via llama.cpp commit 9789512, post PR #19493 merge) on a single RTX 3090. **None of the spec-decode modes — ngram-cache, ngram-mod (including srogmann's recommended n=24 --draft-min 48 --draft-max 64), or classic `--model-draft` with the vocab-matched Qwen3.5-0.8B — achieves net speedup over baseline.** Mean decode drops 3–12 %, with a bimodal tail of 59–67 tok/s on reasoning / code prompts *despite 100 % draft acceptance*.

### Numbers (single 3090, 24 GB, SM 8.6, Q8_0 KV, greedy, batch=1)

| config | mean | min | std | draft_accept |

|--------------------------|--------|--------|-------|------------------|

| **baseline** | 135.7 | 135.3 | 0.3 | — |

| ngmod-n32 | 133.7 | 133.5 | 0.1 | 0 %(never hits) |

| ngmod-n{8,12,16,20,24} | 129–131| 120–130| 2–5 | 100 % |

| **ngcache-kv-fp16** | 121.3 | 67.3 | 27.6 | 100 % |

| **draft-q35-08b-max{8,16,32}** | 120–121 | **59–65** | **~30** | 100 % |

| draft-q35-08b-1000tok | 120.2 | 64.8 | 28.3 | 100 % |

| ngram-cache | 119.1 | 65.3 | 27.8 | 100 % |

| ngcache-1000tok | 115.9 | 60.0 | 28.7 | 100 % |

### Controls (ruled out)

- Reproduction: baseline-rerun 135.5, ngcache-rerun 118.8 — std within a config ≤ 0.4, so the regression isn't jitter.

- **KV quant is not the cause** — switching `-ctk q8_0 -ctv q8_0` → fp16 KV leaves `ngram-cache` at 121.3 tok/s mean.

- **Output length is not the cause** — 300 → 1000 tokens keeps every ratio.

- **Draft-model vocab** — I initially tried `qwen3:0.6b` (vocab 151936) which silently failed (`failed to create draft context`). The correct draft is **Qwen3.5-0.8B** (vocab 248320, matching the target). That one *loads* and drafts and still loses.

### Why

The pattern matches [MoESD (arXiv 2505.19645)](https://arxiv.org/html/2505.19645) and [Utility-Driven SD for MoE (arXiv 2506.20675)](https://arxiv.org/pdf/2506.20675). With A3B (3 B active, 8-of-256 routed, sparsity 0.031), the expert-saturation threshold `T_thres ≈ 94` is well above any realistic draft K. Each drafted token pulls a fresh expert slice through the memory hierarchy, and on a bandwidth-bound 3090 the verification pass pays for the union. 100 % acceptance cannot rescue it.

**Counter-evidence**: srogmann's own benchmark on Qwen3.5-**122B-A10B** (10 B active) in PR #20075 gets +15–45 % from the same machinery. The issue is class-specific to A3B / small-active MoE, not a general regression of PR #19493.

### Practical takeaway

If you run Qwen3.6-35B-A3B on a consumer 3090 today: **don't enable spec-decode**. Just `llama-server -m Qwen3.6-35B-A3B-UD-Q4_K_XL.gguf -ngl 999 -c 16384 -fa on -ctk q8_0 -ctv q8_0` gives you 135.7 tok/s, which is already +27 % vs Ollama 0.20.7 (107 tok/s) on the same hardware.

### Reproducibility

Full raw JSON per request, 3 plots, aggregated CSV, `BENCHMARK_ENV.md` (hardware / driver / CUDA / commit / model SHA256), and the exact `run_*_matrix.sh` — everything at:

**https://github.com/thc1006/qwen3.6-speculative-decoding-rtx3090**

PR #19493 comment with the same data: https://github.com/ggml-org/llama.cpp/pull/19493#issuecomment-4285150166

If you're on a different Ampere card (3060 Ti / 3080 / A4000 / A5000) — would love a replication, happy to merge your JSON into the repo.

---

# v2 Appendix · Follow-up bench (2026-04-22)

Context: two days after the original writeup, Oleg-dM raised three critiques in [HF discussion #14](https://huggingface.co/unsloth/Qwen3.6-35B-A3B-GGUF/discussions/14):

1. `n_acc_tokens / n_gen_tokens` = 100 % looks off

2. `--draft-min 48` is too aggressive, try `--draft-min 2 --draft-max 32`

3. "failed to create draft context" is probably OOM

Instead of arguing, I re-ran on a fresh single-3090 box.

## Setup

- llama.cpp `97895129e` (original) AND cross-check on master `bcb5eeb64` (post PR #22227 speculative-simple checkpoint)

- RTX 3090 24 GB, single GPU, driver 580.126, CUDA 12.0

- **Stock clocks**, no OC (graphics 1965 MHz current / 2100 max; memory 9751 MHz; power limit 350 W)

- `-ngl 999 -c 16384 -fa on -ctk q8_0 -ctv q8_0 -n 200 --temp 0.5 --seed 42 -no-cnv -st`

- 5 prompts, `/no_think` appended

- Draft model: `unsloth/Qwen3.5-0.8B-Q4_K_M.gguf` (vocab-matched)

## Result chart

## Results on `97895129e` (tok/s, N=5)

| Config | mean | min | max | Δ baseline |

|---|---:|---:|---:|---:|

| **baseline** | **139.9** | 139.7 | 140.0 | — |

| `-md --draft-max 8` (default min=5) | 56.5 | 51.5 | 63.0 | −60 % |

| `-md --draft-max 16` (default min=5) | 55.7 | 53.3 | 62.7 | −60 % |

| `-md --draft-max 32` (default min=5) | 55.3 | 52.9 | 63.1 | −60 % |

| `-md` (full defaults) | 55.5 | 52.8 | 62.3 | −60 % |

| **`--draft-min 2 --draft-max 32` (Oleg)** | **65.0** | 61.0 | 75.8 | **−54 %** |

| `--draft-min 2 --draft-max 16` | 66.3 | 60.6 | 76.6 | −53 % |

| `--draft-min 2 --draft-max 64` | 64.7 | 60.6 | 75.3 | −54 % |

| `--draft-min 48 --draft-max 64` (srogmann) | **85.6** | 81.3 | 88.0 | **−39 %** |

## Cross-check on master `bcb5eeb64`

| Config | `97895129e` | master | Δ |

|---|---:|---:|---:|

| baseline | 139.9 | 139.5 | −0.3 % (noise) |

| Oleg 2/32 | 65.0 | 65.2 | +0.3 % (noise) |

| srogmann 48/64 | 85.6 | 85.6 | 0 % |

## Key findings

### 1. 100 % acceptance is real

`common/speculative.cpp` line ~1194: `impl->n_acc_tokens += n_accepted;` — counter is post-verify. `--verbose` run emits `draft acceptance rate = 1.00000 (115 accepted / 115 generated)`. The 0.8B vocab-matched draft genuinely matches the 35B target on low-entropy prompts.

### 2. Oleg's suggestion beats defaults, still loses to baseline

`--draft-min 2 --draft-max 32` at 65 tok/s is +18 % over the default `--draft-min=5` at 55 tok/s. But -54 % vs no-spec-decode 139.9.

### 3. Counter-intuitive: aggressive wins

`--draft-min 48 --draft-max 64` is the LEAST bad at 85.6 tok/s (-39 %). The large draft window amortises per-verify overhead enough to hide part of the cost.

### 4. Why v1 showed mean 120 and v2 shows mean 55-85

v1's 12-prompt set included chat prompts that exhausted the draft cache quickly, falling back to normal decode (~140 tok/s) — the observed tail at 59-67 is the always-active regime, the baseline-like numbers come from the skipped-spec-decode regime. v1's mean 120 is the mixture.

v2's 5 structured prompts keep spec-decode active throughout, isolating the always-on regime.

### 5. Not a stale-commit artefact

Master `bcb5eeb64` includes PR #22227 speculative-simple checkpoint support — gives same numbers. The regression is architectural.

## Conclusion (v1 + v2 combined)

**Consumer RTX 3090 + Qwen3.6-35B-A3B Q4_K_M: no speculative decoding configuration is a net win**, regardless of commit, regardless of draft-min/max settings, regardless of which regime you measure. The memory-bandwidth × MoE expert-loading math doesn't work on this hardware tier.

H100 / H200 / NVLinked pairs may flip the sign; dual-3090 with PCIe crossing between main-GPU and draft-GPU makes it worse (per Oleg's 80 → 25 tok/s observation in the same discussion).

Full artefacts (per-prompt llama-cli logs, GPU state, verbose dump, master cross-check):

→ https://github.com/thc1006/qwen3.6-speculative-decoding-rtx3090/tree/master/v2_3090_followup