<!-- .slide: data-background="https://raw.githubusercontent.com/shawngraham/presentations/gh-pages/viktor-talashuk-bhoj9tHlsiY-unsplash.jpg" -->

<br><br><br>

<section style="text-align: left;">

<h3>

Computational

</h3>

<h3>Ways of Seeing</h3>

<h4><i>but really:</i></h4>

<h2>Ways of <i>Not</i> Seeing</h2>

follow along at <br> https://hackmd.io/@drgraham/not-seeing

</section>

---

Shawn Graham

Carleton U

[@electricarchaeo](https://twitter.com/electricarchaeo)

[bonetrade.github.io](https://bonetrade.github.io)

---

Note:

So much visual material on the web. What's a person to do with all of this? Screen essentialism means we have tonnes of visual data to work with. History is not good with visual data, and certainly not at scale. See the work of Michael Kramer on image sonification -> put a pin in that for now; today we'll talk about ways of seeing that are a bit more 'tractable'.

My issue: there are tens of thousands of posts of people showing off their collections, or actually soliciting buyers/sellers, of human remains. These remains are of people who, like Abraham Ulrikab, never wanted their bodies treated in this way. Part of what our project is doing is trying to figure out if there are ways machine vision might be used at scale to reassert their humanity. This may or may not be possible, as many of the digital technologies I'm going to show you are themselves the tools of a neo digital colonialism, used to strip humanity.

---

Note:

Seeing the Past with Computers. I'm the contrarian paper in there saying 'you can't see the past' and then I do something with sound instead.

---

[ImagePlot](http://lab.softwarestudies.com/p/imageplot.html)

Lev Manovich [Cultural Analytics, Imageplot](http://lab.softwarestudies.com/p/overview-slides-and-video-articles-why.html)

---

[Imj web toy](http://www.zachwhalen.net/pg/imj/) by Zach Whalen does much the same thing, but with a (necessarily) reduced slate of options

Note:

But how do you deal with things like shape, or visual tropes, or tracking some kind of object or person across multiple visual contexts? This is what I mean by 'not seeing': there are things in images that only appear when we zoom out and squint. And sometimes you deal with visual matter that is important to study, but not necessarily something you want to stare at, one image at a time, all day. Like, for instance, the trade in human remains and other 'wet' or 'red market' materials.

---

_gif by Alex Mordvintsev_

Neural Network approaches to images

Note:

No doubt you've heard about neural networks. This is how they work. What I like about this is that the process of feeding an image into a neural network creates a high-dimensional vector (a list of numbers) that not only describes the colour, hue, saturation, brightness and so on of an image, but also lets us compare its position in vector space against thousands of known images. That permits us to assign labels, create new metadata, and measure distances or similarities.

---

## the plan

+ Instagram & its metadata

+ Some tools to grab metadata and/or images

+ PixPlot & other ways of visualizing stuff

+ Scene description

+ Hallucinations

---

## links to notebooks that you can come back to later:

+ [instagram-scraper example](https://colab.research.google.com/drive/1eF76HOujdxkhvYWRAh4ExUaE6uAwq6c-?usp=sharing)

+ [nearest-neighbours to network](https://colab.research.google.com/drive/1EY-AwgcO7jjqwop28X441jMY4MqbYAGE?usp=sharing)

+ [PixPlot](https://colab.research.google.com/drive/1HjiAGMRQSqIlMnu-KF6fd7loL2oFeQDg?usp=sharing)

+ [Azure tagging](https://colab.research.google.com/drive/1bqN_kAespXPsWaUr1wjczCLKIC8UFfHs?usp=sharing)

---

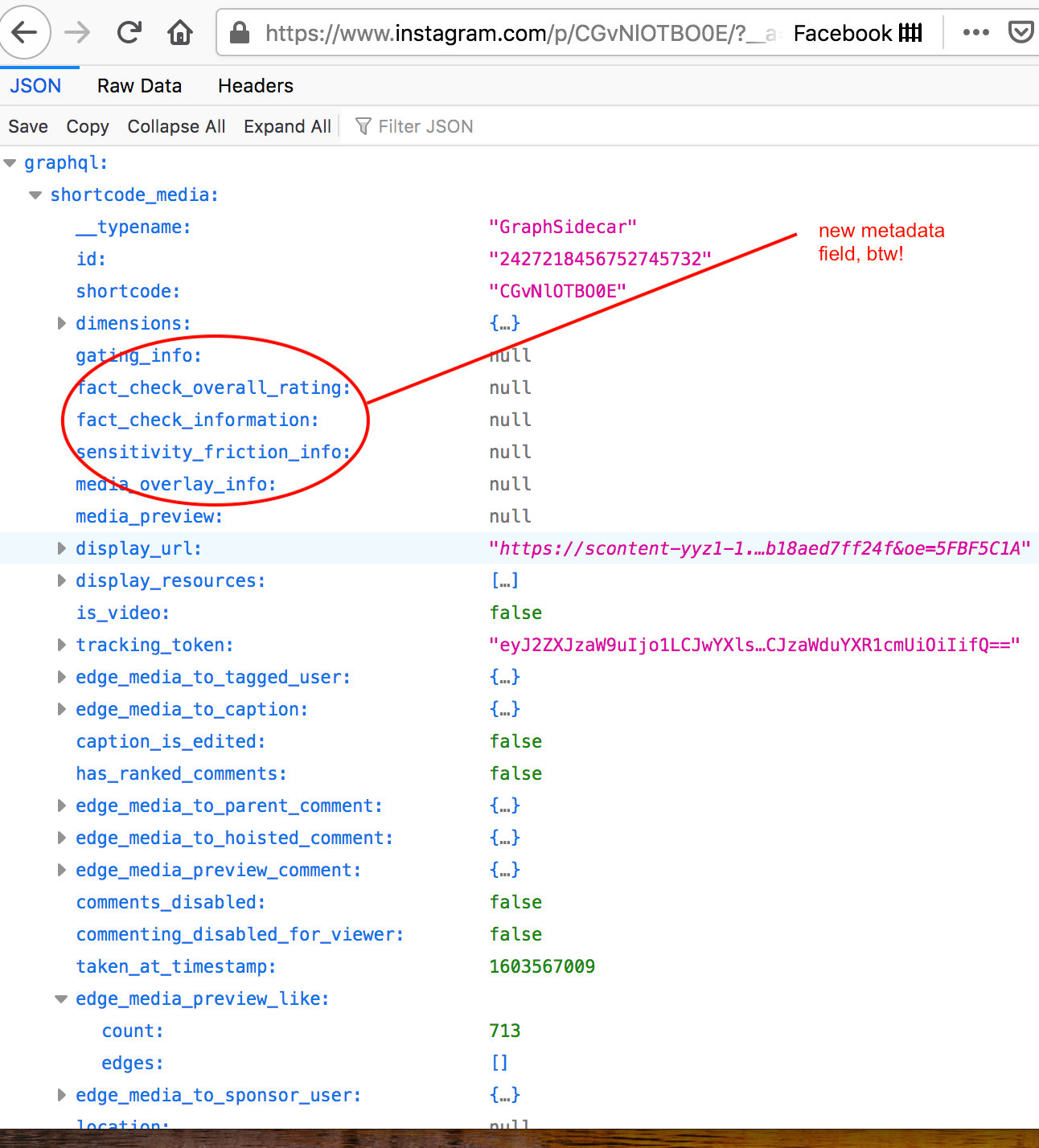

## Instagram

The magic of `?__a=1`

Compare: [https://www.instagram.com/p/CGvNlOTBO0E/](https://www.instagram.com/p/CGvNlOTBO0E/)

with: [https://www.instagram.com/p/CGvNlOTBO0E/?__a=1](https://www.instagram.com/p/CGvNlOTBO0E/?__a=1) (use Firefox, whose built-in JSON viewer makes the response readable)
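
A minimal sketch of the same trick in Python, assuming you have already saved the JSON response to work with. Instagram may require a login or may have changed this endpoint, so the code only builds the URL and walks a nested structure; the `sample` dictionary is invented for illustration and is not a guaranteed description of Instagram's response.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_json_flag(post_url):
    """Return post_url with the __a=1 query parameter added."""
    parts = urlsplit(post_url)
    query = dict(parse_qsl(parts.query))
    query["__a"] = "1"
    return urlunsplit(parts._replace(query=urlencode(query)))


def find_keys(node, wanted):
    """Collect every value stored under the key `wanted` in nested JSON."""
    found = []
    if isinstance(node, dict):
        for key, value in node.items():
            if key == wanted:
                found.append(value)
            found.extend(find_keys(value, wanted))
    elif isinstance(node, list):
        for item in node:
            found.extend(find_keys(item, wanted))
    return found


# Hypothetical, hand-written stand-in for a saved response.
sample = {
    "graphql": {
        "shortcode_media": {
            "shortcode": "CGvNlOTBO0E",
            "edge_media_to_caption": {
                "edges": [{"node": {"text": "example caption"}}]
            },
        }
    }
}

json_url = with_json_flag("https://www.instagram.com/p/CGvNlOTBO0E/")
captions = find_keys(sample, "text")
```

Because posts are nodes with edges hanging off them, a recursive walk like `find_keys` is a forgiving way to pull out a field without hard-coding the full path to it.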

Note:

Instagram uses a graph database under the hood. Every image, every post, every user interaction and so on is just a node to which more edges can be connected as more people like or interact with a post or location.

---

Note:

Instagram can also add completely new metadata with ease. Part of 'not seeing' in the way I'm describing means not only expressing image data as richly as I can, but also associating it with its metadata. Instagram doesn't like people to see this metadata except in ways that they control.

---

*Notebook 1*

Let's collect some Instagram images and metadata

Use [this notebook](https://colab.research.google.com/drive/1eF76HOujdxkhvYWRAh4ExUaE6uAwq6c-?usp=sharing)

If you have Python/Anaconda/Miniconda installed, you can run the code locally; just copy the Python from the notebook to your terminal/command prompt.

---

One thing you could then do: Text analysis or similar on the metadata.

+ This is what we did in our first piece on the trade in human remains ([available to read OA here](https://intarch.ac.uk/journal/issue45/5/index.html))

+ This can give you insight into the *culture* and discourse around a particular kind of visual identity
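
As a rough sketch of what 'text analysis on the metadata' could look like at its simplest, the snippet below counts hashtags and ordinary words across a list of captions. The captions and the tiny stopword list are invented placeholders, and this is not the method used in the paper linked above.

```python
import re
from collections import Counter

# Tiny illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {"the", "a", "an", "and", "of", "for", "to", "in", "is", "this"}


def hashtag_and_word_counts(captions):
    """Return (hashtag counts, word counts) for a list of caption strings."""
    hashtags = Counter()
    words = Counter()
    for caption in captions:
        text = caption.lower()
        hashtags.update(re.findall(r"#(\w+)", text))
        # Remove hashtags so they are not counted again as ordinary words.
        plain = re.sub(r"#\w+", " ", text)
        words.update(
            w for w in re.findall(r"[a-z']+", plain) if w not in STOPWORDS
        )
    return hashtags, words


# Invented example captions standing in for scraped metadata.
captions = [
    "Antique teaching specimen for sale #oddities #curio",
    "New addition to the cabinet #oddities",
]

hashtags, words = hashtag_and_word_counts(captions)
print(hashtags.most_common(3))
```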

---

*Notebook 2*

Visual similarity following Douglas Duhaime's [excellent blog post](https://douglasduhaime.com/posts/identifying-similar-images-with-tensorflow.html)

Use [this notebook](https://colab.research.google.com/drive/1EY-AwgcO7jjqwop28X441jMY4MqbYAGE?usp=sharing)

---

In work led by Katherine Davidson, PhD student at Carleton, we're looking at these similarities as a kind of weighted network over time, to measure flows of visual influence in the bonetrade. Stay tuned!

Note:

Here, the idea is that we turn our images into vectors using the pre-trained neural network 'Inception v3'. We throw out the last layer, the 'classification', and just measure the distance between one vector and another across 2048 dimensions.
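
A toy sketch of the 'measure the distance, then build a network' step. It assumes the image vectors already exist, uses three-dimensional made-up vectors in place of 2048-dimensional ones, and uses cosine distance, which is one common choice and may not be the metric the notebook uses. The function names are hypothetical.

```python
import math


def cosine_distance(u, v):
    """1 minus the cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def nearest_neighbour_edges(vectors, k=1):
    """Link each image to its k closest images.

    vectors: dict mapping image name -> list of floats.
    Returns a list of (source, target, similarity) tuples, where
    similarity = 1 - cosine distance, usable as an edge weight.
    """
    edges = []
    for name, vec in vectors.items():
        scored = sorted(
            (cosine_distance(vec, other), other_name)
            for other_name, other in vectors.items()
            if other_name != name
        )
        for dist, other_name in scored[:k]:
            edges.append((name, other_name, 1.0 - dist))
    return edges


# Made-up vectors; real ones would come from the network's penultimate layer.
vectors = {
    "a.jpg": [1.0, 0.0, 0.0],
    "b.jpg": [0.9, 0.1, 0.0],
    "c.jpg": [0.0, 0.0, 1.0],
}

edges = nearest_neighbour_edges(vectors, k=1)
for source, target, weight in edges:
    print(f"{source} -> {target} (similarity {weight:.3f})")
```

The resulting edge list can be loaded into any network tool as a weighted, directed graph.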

---

*Notebook 3: [PixPlot](https://dhlab.yale.edu/projects/pixplot/)*

Use [this notebook](https://colab.research.google.com/drive/1HjiAGMRQSqIlMnu-KF6fd7loL2oFeQDg?usp=sharing) to run PixPlot; then download the 'output' folder to your own machine. At the terminal in that folder, run `python -m http.server 5000` and open `http://localhost:5000` in your browser.

Note:

Duhaime and collaborators have packaged the visual similarity routine up with an image browser. With one line of code, you can have similarity expressed and visualized according to various clustering algorithms, and view these patterns at a distance.

---

*Notebook 4: Scene descriptions with Azure tagging*

+ [Documentation here](https://docs.microsoft.com/en-us/rest/api/cognitiveservices/computervision/describeimage/describeimage)

+ Notebook [here](https://colab.research.google.com/drive/1bqN_kAespXPsWaUr1wjczCLKIC8UFfHs?usp=sharing); note you'll need to register for Azure to get an API key, which you put into the appropriate line in the code
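
A hedged sketch of what a 'describe image' call might look like with only the standard library. The `v3.2` path, the endpoint hostname, and the sample response are assumptions for illustration; check the documentation above for the version your resource supports, and note the notebook may do this differently. Nothing is sent over the network here: the code only builds the request and parses a response shaped like the documented one.

```python
import json
import urllib.request


def build_describe_request(endpoint, api_key, image_url, max_candidates=1):
    """Build (but do not send) a POST request asking Azure to describe an image."""
    url = (
        f"{endpoint.rstrip('/')}/vision/v3.2/describe"
        f"?maxCandidates={max_candidates}"
    )
    body = json.dumps({"url": image_url}).encode("utf-8")
    headers = {
        "Ocp-Apim-Subscription-Key": api_key,  # your key goes here
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def best_caption(response_json):
    """Return (text, confidence) of the highest-confidence caption, or None."""
    captions = response_json.get("description", {}).get("captions", [])
    if not captions:
        return None
    top = max(captions, key=lambda c: c.get("confidence", 0.0))
    return top["text"], top.get("confidence")


# Placeholder endpoint, key, and image URL.
request = build_describe_request(
    "https://example.cognitiveservices.azure.com/",
    "YOUR-API-KEY",
    "https://example.org/image.jpg",
)

# To actually send it (requires a valid key and endpoint):
# with urllib.request.urlopen(request) as resp:
#     data = json.load(resp)

# Invented response for illustration only.
sample_response = {
    "description": {
        "tags": ["indoor", "table"],
        "captions": [{"text": "a table with objects on it", "confidence": 0.82}],
    }
}
caption = best_caption(sample_response)
```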

---

<small> You can see what we did with that code in Graham, S.; Huffer, D.; Blackadar, J. Towards a Digital Sensorial Archaeology as an Experiment in Distant Viewing of the Trade in Human Remains on Instagram. Heritage 2020, 3, 208-227. [link](https://www.mdpi.com/2571-9408/3/2/13) </small>

---

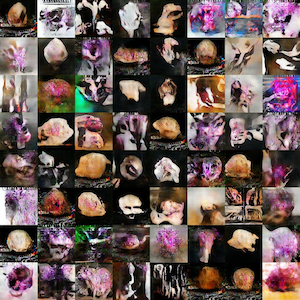

Hallucinating Images

Dev Nag, https://medium.com/@devnag/generative-adversarial-networks-gans-in-50-lines-of-code-pytorch-e81b79659e3f

---

What the Machine Sees in the Bone Trade

<small>I cheated; I don't have enough GPU power for a GAN, so I followed [this tutorial](https://ml4a.github.io/classes/itp-F18/06/#) using the [Paperspace service](https://ml4a.github.io/classes/itp-F18/06/#)</small>

---

<iframe src="https://giphy.com/embed/lD76yTC5zxZPG" width="480" height="352" frameBorder="0" class="giphy-embed" allowFullScreen></iframe>

## That's all folks!

<small>_title slide image by Viktor Talashuk [unsplash.com](https://unsplash.com/photos/bhoj9tHlsiY)_</small>

---

## links to notebooks for future reference:

+ [instagram-scraper example](https://colab.research.google.com/drive/1eF76HOujdxkhvYWRAh4ExUaE6uAwq6c-?usp=sharing)

+ [nearest-neighbours to network](https://colab.research.google.com/drive/1EY-AwgcO7jjqwop28X441jMY4MqbYAGE?usp=sharing)

+ [PixPlot](https://colab.research.google.com/drive/1HjiAGMRQSqIlMnu-KF6fd7loL2oFeQDg?usp=sharing)

+ [Azure tagging](https://colab.research.google.com/drive/1bqN_kAespXPsWaUr1wjczCLKIC8UFfHs?usp=sharing)
