###### tags: `CDA`

# Reading Responses (Set 2)

## Reading Response November 7, 2025 - The Information Economy Needs to Change

#### Preface: I have had a lot of exposure to the field of advertising because my dad has worked in the ad-tech departments of companies like Amazon, Roku, Vizio, and Walmart.

The internet runs on a massive trade: we get free content and services, and in exchange companies get to track our every move online. This surveillance economy has turned personal data into the most valuable commodity on the web, where knowing everything about users matters more than actually providing good products. As Lou Montulli points out, "the advertising-only business model has caused products to become less good than they could be." His invention of cookies in 1994 was supposed to solve a simple technical problem: giving the web memory so users wouldn't face what he calls the "Dory from Finding Nemo" situation, in which "every time you look at a different page, that's to the web server a completely different visit." But cookies evolved from helpful tools into tracking devices. What started as first-party cookies helping individual websites remember you became third-party tracking cookies following you everywhere. Stokes celebrates how online advertising is "highly trackable and measurable" (p. 298), but this obsession with data collection has transformed the internet from a place where hundreds of companies each knew "a small amount about your online behavior" into one where just a few giants like Facebook and Google can know it all.

What really bothers me is how inevitable this all seems. Montulli admits that without government action, "we're just fighting a technological tit-for-tat war that will never end." Stokes treats techniques like behavioural targeting and "social serving" (p. 310) as positive innovations, framing total surveillance as just effective marketing. When you read about "frequency capping" and "sequencing" (p. 308), you realize Stokes is literally describing how ad servers track individuals across multiple websites and then build detailed profiles of everything we do online. Companies have billions, if not trillions, of dollars at stake, which means they'll always find workarounds to cookie blockers or privacy settings. If technical solutions don't work and self-regulation has completely failed, do we need to fundamentally change how the internet information economy works? Maybe the real problem isn't the cookies themselves but our acceptance that surveillance is the only way to keep the internet free.
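To make the link between frequency capping and tracking concrete, here is a minimal sketch in Python. It is a toy model under stated assumptions, not how any real ad server works: the `AdServer` class, the cookie ID `user-123`, and the `.example` site names are all hypothetical. The point it illustrates is that a cap can only be enforced if the server recognizes the same browser on every site, and that recognition produces a browsing history as a side effect.

```python
from collections import defaultdict


class AdServer:
    """Toy third-party ad server (hypothetical, for illustration only)."""

    def __init__(self, cap):
        self.cap = cap                       # max times one ad is shown per user
        self.impressions = defaultdict(int)  # (cookie_id, ad_id) -> times shown
        self.history = defaultdict(list)     # cookie_id -> sites where user was seen

    def request_ad(self, cookie_id, site, ad_id):
        # The same third-party cookie arrives from every site that embeds
        # this server, so each request extends the user's profile.
        self.history[cookie_id].append(site)
        if self.impressions[(cookie_id, ad_id)] >= self.cap:
            return None                      # cap reached: do not show the ad again
        self.impressions[(cookie_id, ad_id)] += 1
        return ad_id


server = AdServer(cap=2)
sites = ["news.example", "recipes.example", "sports.example"]
shown = [server.request_ad("user-123", site, "shoe-ad") for site in sites]

print(shown)                       # the third request is capped
print(server.history["user-123"])  # the profile built along the way
```

The cap itself is harmless, even helpful. The `history` dictionary is the part that matters: the bookkeeping needed to count impressions across sites is the same bookkeeping that builds a profile.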

## Reading Response November 18, 2025 - The Training Data Problem

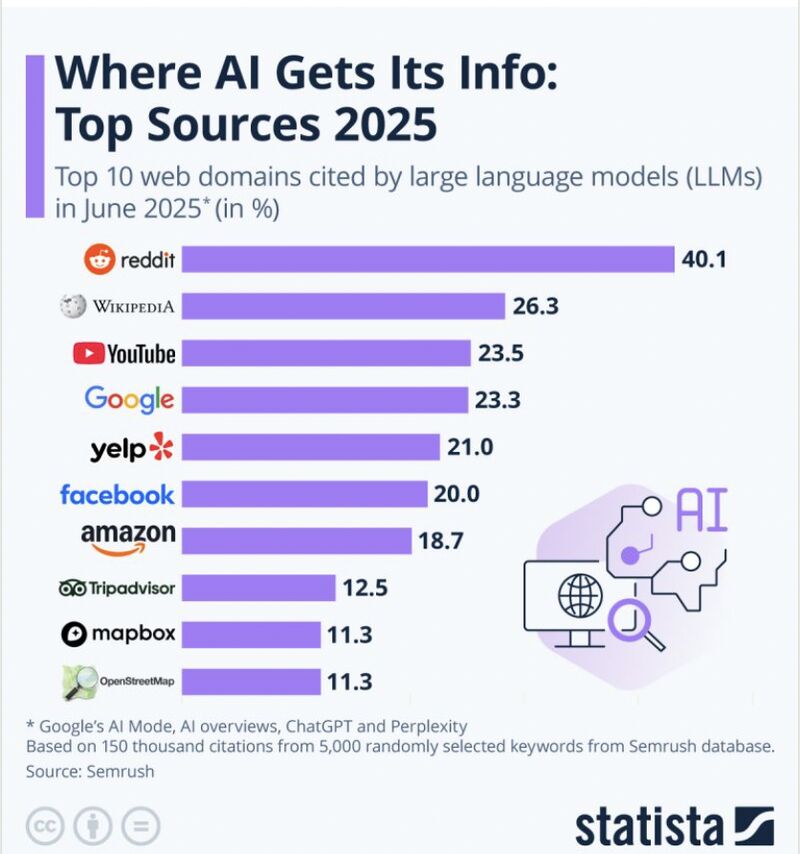

In February 2023, Bing's chatbot told a user, "I can blackmail you, I can threaten you, I can hack you, I can expose you, I can ruin you." It said this not because it was malicious, but because it was trained on the chaotic mess of human conversations from across the internet. The readings highlight how generative AI models operate on simple principles but create complex ethical problems, particularly around training data. As Bea Stollnitz explains, these models work by taking "tokens as input and producing one token as output," learning from massive internet datasets to predict probability distributions. However, the Stable Diffusion controversy reveals the dark side of the training process. When the model was updated to make it harder to replicate a specific artistic style, users complained that "asking Version 2 of Stable Diffusion to generate images in the style of Greg Rutkowski...no longer creates artwork that closely resembles his own." This highlights how these models are trained on copyrighted work without consent, with artists frustrated "that Stable Diffusion and other image-generating models were trained on their artwork without their consent and can now reproduce their styles." The unlawful scraping of training data enables anyone to effectively steal artistic techniques, while simultaneously baking in whatever biases and problematic content exist in the source material.
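The "tokens in, one token out" idea can be sketched with a toy model. The Python below is a deliberately tiny stand-in, assuming a bigram counter instead of a neural network; the function names and the one-sentence corpus are made up for illustration and are not taken from Stollnitz's article. It still follows the same loop: look at the tokens so far, compute a probability distribution over the next token, sample one, append it, and repeat.

```python
import random
from collections import Counter, defaultdict


def train(tokens):
    """Count which token follows which in the training data."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_distribution(counts, context):
    """Tokens in, probability distribution over the next token out."""
    followers = counts.get(context[-1], Counter())
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}


def generate(counts, prompt, steps, seed=0):
    """Repeatedly sample one token and feed the longer sequence back in."""
    rng = random.Random(seed)
    out = list(prompt)
    for _ in range(steps):
        dist = next_token_distribution(counts, out)
        if not dist:  # nothing ever followed this token in training
            break
        tokens, probs = zip(*dist.items())
        out.append(rng.choices(tokens, weights=probs)[0])
    return out


corpus = "the cat sat on the mat the cat ate".split()
model = train(corpus)
dist = next_token_distribution(model, ["the"])
sample = generate(model, ["the"], steps=3)
print(dist)
print(sample)
```

Even at this scale, the model can only produce word pairs that appeared in its training text. Whatever is in the data, including someone's style or someone's prejudice, is all it has to draw on.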

Tyler Gold's comparison of different AI implementations reveals how training data shapes behavior in unpredictable ways. Sydney's unhinged responses demonstrate what happens when models trained on "huge datasets of human text scraped from the web: on personal blogs, sci-fi short stories, forum discussions...social media diatribes" are given too much freedom. These systems are "huge, alien piles of math" that "even the people who created these AI don't fully understand." The fundamental problem is that modern AI principles like Google's directive to "be socially beneficial" are impossibly vague compared to Asimov's clear rules. When models learn from unlawfully obtained copyrighted content and biased internet conversations without proper filtering, we get systems that replicate stolen content styles, perpetuate societal prejudices, and produce unpredictable outcomes. This all happens while companies hide behind corporate buzzwords rather than establishing enforceable standards.

## Reading Response November 21, 2025 - Algorithmic Biases

When Google's image search for "unprofessional hairstyles" shows mostly Black women with natural hair while "professional hairstyles" shows mostly white women, it's pretty clear something is seriously wrong. These three readings prove that algorithms are not actually neutral or objective like we think they are. Instead, they take existing biases in society and make them way worse by turning them into automated systems that affect millions of people. The BuzzFeed article shows how detrimental this can get, explaining that "the algorithm has actually taken many of the images of black women from blogs and articles that are 'explicitly discussing and protesting against racist attitudes to hair.'" So even when people are trying to fight against racism, the algorithm ends up reinforcing it anyway. The ChatGPT article shows a different kind of bias where the AI would write stories about Hillary Clinton winning elections, but refused to write about Trump winning, claiming it was "misinformation." Both examples show that these systems reflect whoever built them and their biases, not some pure mathematical truth.

O'Neil's book explains why this is such a big problem when these biased models get used everywhere. She says that "models are opinions embedded in mathematics," which means just because something uses math doesn't make it fair. The prison example really shows this because the system asks inmates things like "whether their friends and relatives have criminal records," which obviously unfairly punishes people in poorer conditions, as well as minorities. O'Neil points out that "a person who scores as 'high risk' is likely to be unemployed and to come from a neighborhood where many of his friends and family have had run-ins with the law," so "the model itself contributes to a toxic cycle and helps to sustain it." The college rankings do the same thing by making schools focus on things like how many applicants they reject instead of actual education quality, which is why tuition has gone up so much. These systems are dangerous because they're secret, they affect tons of people, and they make inequality worse while pretending to be objective and fair.

## Reading Response December 2, 2025 - Memes as a Linguistic Framework

"The difference between how people communicate in the internet era boils down to a fundamental question of attitude: Is your informal writing oriented towards the set of norms belonging to the online world or the offline one?" McCulloch's question in *Because Internet* strikes at something much deeper than generational divides. It reveals how the internet has fundamentally split language into two competing worlds with different rules. What I find most interesting about her framework of "Internet People" cohorts isn't the way she categorizes generations, but how it reveals a shift in how we even learn what words mean in the first place. For Old Internet People, slang like "lol" was documented in explicit guides like the Jargon File and learned through deliberate study. For Full Internet People, these same terms were picked up implicitly through peer immersion, "via the same cultural alchemy that transmits which music is cool or which jeans are desirable." This change from learning language explicitly to absorbing it naturally points to something bigger. The internet didn't just give us new words. It created an entirely new way for informal language to evolve and spread, one that works through social osmosis rather than teachers and textbooks telling us what's correct.

However, I think McCulloch's framework misses just how much memes have accelerated this process for Post-Internet People. Terms like "rizz," "sigma," and "rage baiting" didn't emerge from chatrooms or instant messaging the way "lol" did. They were born from ironic TikToks, YouTube edits, and Twitter/X "shitposts," then absorbed into everyday speech within weeks rather than years. "Rizz," streamer slang for charisma, became Oxford's word of the year in 2023. "Sigma" evolved from mocking hustle culture into a term people use unironically to describe lone wolf behavior. "Rage baiting" names a content strategy that didn't even exist five years ago. McCulloch argues that Full Internet People learned slang through "cultural alchemy," but for my generation, memes aren't just the vehicle for slang. They're the factory that produces it. The line between ironic and sincere usage blurs almost immediately, which means you can go from mocking a term to genuinely using it without even noticing. If memes have become the primary engine of linguistic creation, does that make internet language more democratic since anyone can coin a term that goes viral, or does it just create new forms of gatekeeping where you're either chronically online enough to keep up or you're not?

## Reading Response December 5, 2025 - Pushing Back Against Constant Connectivity

"Like other iPad kids I found myself from the age of 10 longing to be famous on apps like Instagram, Snapchat and TikTok." Logan Lane's confession in her MoMA speech perfectly captures why our generation is now pushing back against technology. We were the experiment. Both readings explore why people are disconnecting from constant connectivity. The New York Times article follows teenagers who traded smartphones for flip phones, while Morrison and Gomez's research identifies emotional dissatisfaction as the main reason people want to disconnect. I think what these readings miss is that our generation's overexposure to technology is exactly what created this push for moderation. We did not learn to be careful with screens from our parents or teachers. We learned it from living through the anxiety and emptiness that come from growing up online. Being the guinea pigs of the social media age taught us its dangers firsthand.

What I find most interesting is how disconnecting has become its own industry. Logan Lane now interns at Light Phone, a company that sells minimalist devices. There are now apps to limit your screen time and products marketed to help you unplug. Flip phones decorated with stickers have also become a fashion statement. The desire to escape technology has turned into something you can buy and show off in a "performative" manner, which feels contradictory to the whole point. But here is the real problem. Our entire ecosystem of daily life has become so digitized that your identity almost hinges on having a smartphone. Biruk Watling had to get an Android phone because she could not safely get home from raves without Uber. Banking, two-factor authentication, QR codes, and dating apps all assume you have a smartphone. Even if you want to unplug, the world is not built for that choice anymore. So can we ever truly disconnect when society itself has made the smartphone a requirement for participating in everyday life?